Testing the Manifold Hypothesis

Undergrad Researcher: Adrian Lopez

Principal Investigator: Prof John Harer

High-dimensional data is everywhere, with prominent examples in speech, images, and text. Deep neural networks (DNNs) have recently generated a great deal of excitement due to breakthroughs in “learning” high-performing functions between high-dimensional spaces, for example in computer vision for image classification or in natural language processing. The main theory for their success stems from the manifold hypothesis: although a dataset lies in a high-dimensional space, much of its relevant structure (i.e. the degrees of freedom or support of the data) lies along a low-dimensional manifold, and many experiments suggest this is the case. Under this hypothesis, DNNs perform so well at building functions between high-dimensional spaces in large part because their hidden layers can “disentangle” highly tangled manifolds in input space into flattened manifolds in hidden space (i.e. in the hidden layers).

The Quantum Schur Transform and its Applications

Undergrad Researcher: Joey Li

Principal Investigator: Prof Ezra Miller

The quantum Schur transform is a fundamental protocol in quantum information theory which performs a change of basis from a local, qudit-level description of a system to a global, symmetry-based representation. More formally, Schur-Weyl duality allows the simultaneous decomposition of n-fold tensor products of d-dimensional complex space into irreducible representations of the unitary and symmetric groups, and the Schur transform is the particular change of basis from our standard basis to the basis induced by these actions. In 2005, [1] introduced an efficient implementation of the quantum Schur transform, which allowed many quantum information protocols to become experimentally viable. In this paper, we review their work and implement the quantum Schur transform on IBM’s quantum computers. In addition, we study the use of the quantum Schur transform for the specific purpose of optimal qubit purification, as first outlined in [2].
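
As a small illustration of the change of basis described above, the smallest nontrivial case (n = 2 qubits, d = 2) can be written out by hand: the Schur basis is the familiar triplet/singlet basis. The sketch below is a plain classical matrix computation, not the efficient circuit of [1] and not code for IBM hardware; the helper names and the angles used to build the test unitary V are assumptions made only for this example. It checks that, in the Schur basis, the qubit swap becomes diagonal and V ⊗ V becomes block diagonal (a 3×3 block and a 1×1 block).

```python
import cmath
import math

def matmul(a, b):
    """Multiply two matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def dagger(a):
    """Conjugate transpose of a nested-list matrix."""
    return [[complex(a[j][i]).conjugate() for j in range(len(a))]
            for i in range(len(a[0]))]

def kron(a, b):
    """Kronecker (tensor) product of two square matrices."""
    m = len(b)
    size = len(a) * m
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(size)]
            for i in range(size)]

def schur_two_qubits():
    """Rows are the Schur basis vectors for n = 2, d = 2, written in the
    computational basis |00>, |01>, |10>, |11>."""
    s = 1 / math.sqrt(2)
    return [
        [1, 0, 0, 0],    # triplet: |00>
        [0, s, s, 0],    # triplet: (|01> + |10>)/sqrt(2)
        [0, 0, 0, 1],    # triplet: |11>
        [0, s, -s, 0],   # singlet: (|01> - |10>)/sqrt(2)
    ]

def conjugate_by(u, m):
    """Express the operator m in the basis given by the rows of u."""
    return matmul(matmul(u, m), dagger(u))

U = schur_two_qubits()

# The symmetric group S_2 acts by swapping the two qubits.
SWAP = [[1, 0, 0, 0], [0, 0, 1, 0], [0, 1, 0, 0], [0, 0, 0, 1]]
swap_in_schur = conjugate_by(U, SWAP)

# A single-qubit unitary V; the angles are arbitrary illustrative values.
theta, phi, lam = 0.7, 0.3, 1.1
V = [[math.cos(theta), -cmath.exp(1j * lam) * math.sin(theta)],
     [cmath.exp(1j * phi) * math.sin(theta),
      cmath.exp(1j * (phi + lam)) * math.cos(theta)]]
vv_in_schur = conjugate_by(U, kron(V, V))

# Largest entry coupling the triplet block to the singlet block.
leakage = max(max(abs(vv_in_schur[i][3]), abs(vv_in_schur[3][i]))
              for i in range(3))
print("SWAP diagonal in Schur basis:",
      [round(swap_in_schur[i][i].real, 6) for i in range(4)])
print("triplet/singlet leakage of V (x) V:", leakage)
```

The swap acts as +1 on the three symmetric vectors and as -1 on the antisymmetric one, while V ⊗ V acts on the singlet by the scalar det(V); this is the n = 2 instance of the simultaneous decomposition given by Schur-Weyl duality.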

Laplacian flow in the cohomogeneity-one setting

Undergrad Researcher: Anuk Dayaprema

Principal Investigators: Prof Mark Haskins, Prof Mark Stern

We say a three-form ϕ on a smooth 7-dimensional manifold M is a G2 structure if it satisfies a certain non-degeneracy condition. The existence of such a ϕ is indeed equivalent to the reduction of the structure group of the frame bundle of M to G2. In particular, ϕ gives rise to an orientation vol_ϕ and a Riemannian metric g_ϕ, albeit nonlinearly. Of particular interest are G2 manifolds, which are manifolds with G2 structure whose Riemannian holonomy group is contained in G2. One can show that this is equivalent to the conditions dϕ = 0 and d∗_ϕ ϕ = 0, and we say such structures are torsion-free. One idea to construct torsion-free G2 structures, which draws from geometric flows such as the Ricci flow, is to consider the Laplacian flow

(∂/∂t)ϕ = ∆_ϕ ϕ.

Note that torsion-free G2 structures are critical points of the flow. Special self-similar solutions, called solitons, to the Laplacian flow for closed ϕ are given by triples (λ, X, ϕ), where λ ∈ R and X is a vector field, which satisfy

∆_ϕ ϕ = λϕ + L_X ϕ.

Solitons give insight into singularities of the flow, which is why we consider them in this project.
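
The remark that torsion-free G2 structures are critical points of the flow can be checked in one line. The sketch below assumes the usual Hodge Laplacian convention, which the abstract does not spell out: ∆ = d d* + d* d, where d* is the adjoint of d with respect to the metric induced by ϕ and equals the Hodge star conjugate of d up to sign, so the second torsion-free condition is equivalent to d*ϕ = 0.

```latex
\Delta_\varphi \varphi
  = d\, d^{*}_{\varphi} \varphi + d^{*}_{\varphi}\, d\varphi ,
\qquad
d\varphi = 0 \ \text{and}\ d^{*}_{\varphi}\varphi = 0
\;\Longrightarrow\;
\Delta_\varphi \varphi = 0
\;\Longrightarrow\;
\frac{\partial \varphi}{\partial t} = 0 .
% For closed \varphi only the first term survives, so the soliton equation reads
d\, d^{*}_{\varphi} \varphi = \lambda \varphi + \mathcal{L}_X \varphi .
```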

Schur Polynomials and Crystal Graphs

Undergrad Researcher: Lucas Fagan

Principal Investigator: Prof Spencer Leslie

I examined the root system of GL_n in order to understand and calculate the i-trails from A_i to w_0 s_i A_i. Work of Dr. Leslie, along with that of Berenstein and Zelevinsky, has produced a formula to easily calculate the monomials that appear in integrals used to study Mirković-Vilonen (MV) polytopes in a non-archimedean context. Specifically, I automated the calculation of the i-trails and the corresponding monomials for GL_n, and then calculated some of these MV integrals in order to study their behavior off the polytope, with the idea of resonance.

Invertible Neural Network for Power Amplifier Linearization

Undergrad Researcher: Andre Wang

Principal Investigators: Prof Vahid Tarokh, Yi Feng

We aim to use deep learning and reinforcement learning techniques to understand the nonlinear distortion of incoming signals caused by thermodynamic effects in power amplifiers. Power amplifiers are a critical component in communication systems. Previous research has approached this problem by building mathematical and statistical models of the nonlinear distortion, and the standard technique is to place a pre-distortion module before the power amplifier to reverse (invert) the nonlinear effect of the amplifier. Our aim is to improve this technique using deep learning and reinforcement learning, so that the proposed method can linearize a wide range of signals and comes with theoretical guarantees, and finally to potentially turn it into a commercially available product (by making the process faster and more efficient).

Policy Gradient Convergence in Discounted LQR

Undergrad Researcher: Craig Chen

Principal Investigator: Prof Andrea Agazzi

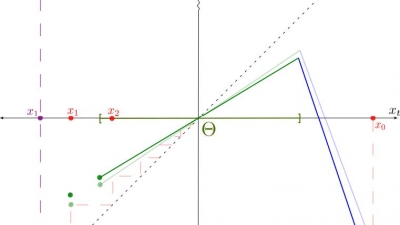

The aim of this paper is to provide a convergence guarantee for policy gradient algorithms in the setting of the Linear-Quadratic Regulator (LQR). Specifically, we show that, starting from any random initialization of a linear (in the state space) policy, the algorithm converges to the global optimum of the undiscounted LQR in finite time. In related existing works, it is assumed that the initial policy is stabilizing; however, our result shows that, at the cost of more training iterations, it is possible to do away with this assumption. Additionally, we provide an example to show that a policy that is linear in the parameters does not converge in this setting.
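
To make the setting concrete, here is a minimal sketch of exact gradient descent on the gain of a linear policy u = -k·x for a scalar discounted LQR. It is not the algorithm or the proof from the paper: the system parameters, discount factor, step size, and function names are all assumptions chosen for illustration, the gradient is computed in closed form rather than estimated from rollouts, and the target is the optimum of the discounted problem. It does show one relevant effect: with a discount factor, the initial gain k = 0 has finite cost even though it does not stabilize the system (|a| > 1), so gradient descent can start from it.

```python
import math

def discounted_cost(k, a, b, q, r, gamma):
    """Cost of u = -k*x from x0 = 1 for x' = a*x + b*u,
    with stage cost q*x^2 + r*u^2 and discount factor gamma."""
    c = a - b * k                      # closed-loop multiplier
    denom = 1.0 - gamma * c * c
    if denom <= 0.0:
        return math.inf                # the discounted series diverges
    return (q + r * k * k) / denom

def cost_gradient(k, a, b, q, r, gamma):
    """Exact derivative of discounted_cost with respect to the gain k."""
    c = a - b * k
    denom = 1.0 - gamma * c * c
    if denom <= 0.0:
        raise ValueError("cost is infinite at this gain")
    numer = q + r * k * k
    return (2.0 * r * k * denom - numer * 2.0 * gamma * b * c) / denom ** 2

def policy_gradient(k0, a, b, q, r, gamma, step=0.05, iters=2000):
    """Plain gradient descent on the gain, starting from k0."""
    k = k0
    for _ in range(iters):
        k -= step * cost_gradient(k, a, b, q, r, gamma)
    return k

def riccati_gain(a, b, q, r, gamma, iters=2000):
    """Reference optimum from value iteration on the discounted
    scalar Riccati equation."""
    p = q
    for _ in range(iters):
        p = q + gamma * a * a * p - (gamma * a * b * p) ** 2 / (r + gamma * b * b * p)
    return gamma * a * b * p / (r + gamma * b * b * p)

# Hypothetical unstable scalar system (|a| > 1) with discount 0.5.
a, b, q, r, gamma = 1.2, 1.0, 1.0, 1.0, 0.5
k_pg = policy_gradient(0.0, a, b, q, r, gamma)
k_opt = riccati_gain(a, b, q, r, gamma)
print("gain from gradient descent:", k_pg)
print("gain from Riccati iteration:", k_opt)
```

With these particular numbers the two gains agree to numerical precision. The fixed step size was picked by hand for this example; a larger step could push the gain into the region where the cost is infinite, which `cost_gradient` reports as an error.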

Kynurenergic Treatments of Huntington's Disease - Mathematical Modeling Insights

Undergrad Researcher: Milov Kirill

Principal Investigator: Prof Mike Reed

In my research as a part of PRUV, I developed mathematical models of tryptophan metabolism in healthy subjects and Huntington’s Disease patients, using the work of Stavrum et al. [1], and applied these models to explore possible treatments of Huntington’s disease. The models are sets of differential equations that relate the rate of change of each metabolite along a metabolic pathway to a linear combination of the velocities of the reactions in which that metabolite participates. These velocities were approximated using equations from Michaelis-Menten kinetics and some similar expressions. I introduced multiple modifications, such as different maximum velocities of certain reactions, separation of the branches of the pathway into different cell types, and excretion of the neuroactive metabolite kynurenic acid, to make sure the models fit the purpose and represent the experimental data well. I then tested different treatment strategies based on curbing or increasing the activity of enzymes that play a role in tryptophan metabolism, and found the extent of alteration that could maximize the therapeutic benefits. I found that, from multiple perspectives, blocking the enzymes IDO or TDO would be the best therapeutic approach, although blocking KMO, the most popular of the explored approaches, could fit the purpose as well. Manipulating TPH and KYNU, although unlikely to be effective by themselves, could achieve specific goals and be used in combination with the aforementioned treatments. 3HAA, as well as KAT, are likely to be less attractive therapeutic targets.
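
The structure of such a model can be sketched with a toy two-step pathway, where each metabolite changes at a rate equal to the velocity of the reaction producing it minus the velocity of the reaction consuming it, and each velocity follows Michaelis-Menten kinetics. This is not the tryptophan model itself: the metabolites A and B, the constant influx, and every parameter value below are hypothetical, and simple forward Euler stepping is used only to keep the example short. Lowering the maximum velocity of the second enzyme stands in for partially blocking an enzyme.

```python
def michaelis_menten(s, vmax, km):
    """Reaction velocity for substrate concentration s."""
    return vmax * s / (km + s)

def simulate(v_in, vmax1, km1, vmax2, km2,
             a0=0.0, b0=0.0, dt=0.01, t_end=200.0):
    """Integrate the toy pathway  (influx) -> A -> B -> (removal)
    with forward Euler and return the final concentrations (A, B).

    dA/dt = v_in - v1(A)
    dB/dt = v1(A) - v2(B)
    """
    a, b = a0, b0
    for _ in range(int(round(t_end / dt))):
        v1 = michaelis_menten(a, vmax1, km1)
        v2 = michaelis_menten(b, vmax2, km2)
        a += dt * (v_in - v1)
        b += dt * (v1 - v2)
    return a, b

def steady_state(v_in, vmax, km):
    """Concentration at which a Michaelis-Menten step carries flux v_in
    (requires v_in < vmax)."""
    return km * v_in / (vmax - v_in)

# Hypothetical parameters: baseline versus partial inhibition of enzyme 2.
baseline = simulate(v_in=1.0, vmax1=3.0, km1=2.0, vmax2=2.0, km2=1.0)
inhibited = simulate(v_in=1.0, vmax1=3.0, km1=2.0, vmax2=1.5, km2=1.0)

print("baseline  (A, B):", baseline)
print("inhibited (A, B):", inhibited)
print("predicted B at steady state:",
      steady_state(1.0, 2.0, 1.0), "->", steady_state(1.0, 1.5, 1.0))
```

In this toy pathway, reducing the capacity of the second enzyme leaves the upstream metabolite A unchanged at steady state but raises the level of B, the kind of shift in metabolite levels that a treatment simulation would track.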